I was born and raised in Olympia, Washington. I studied at Carnegie Mellon University, graduating with a B.S. in Computer Science in May 2020.

I am currently searching for my next opportunity. Get in touch!

Outside of work, I enjoy spending time with friends, reading, rock climbing, and playing tennis. I also love to travel, explore, and learn.

September 2016

This was the beginning of my hackathon career.

The idea for a robotic ramen machine actually came about before I entered college.

Over the summer, I was direct messaging my future roommate, and we were fantasizing about

how great an automatic ramen machine would be. Ramen is the quintessential college

meal, and since we all know we're too busy studying, a ramen robot seems necessary

for a college student's life. A few weeks into college, we heard about CMU's

beginner hackathon, HackCMU. This would be the perfect opportunity to build it!

We bought wood, scraped together cardboard and duct tape, and found an Arduino, and

the rest is history. We successfully created an internet of things robotic ramen

preparer controlled by an iPhone app. Bob's Ramen won the Mentor's Choice Award

sponsored by Microsoft.

February 2017

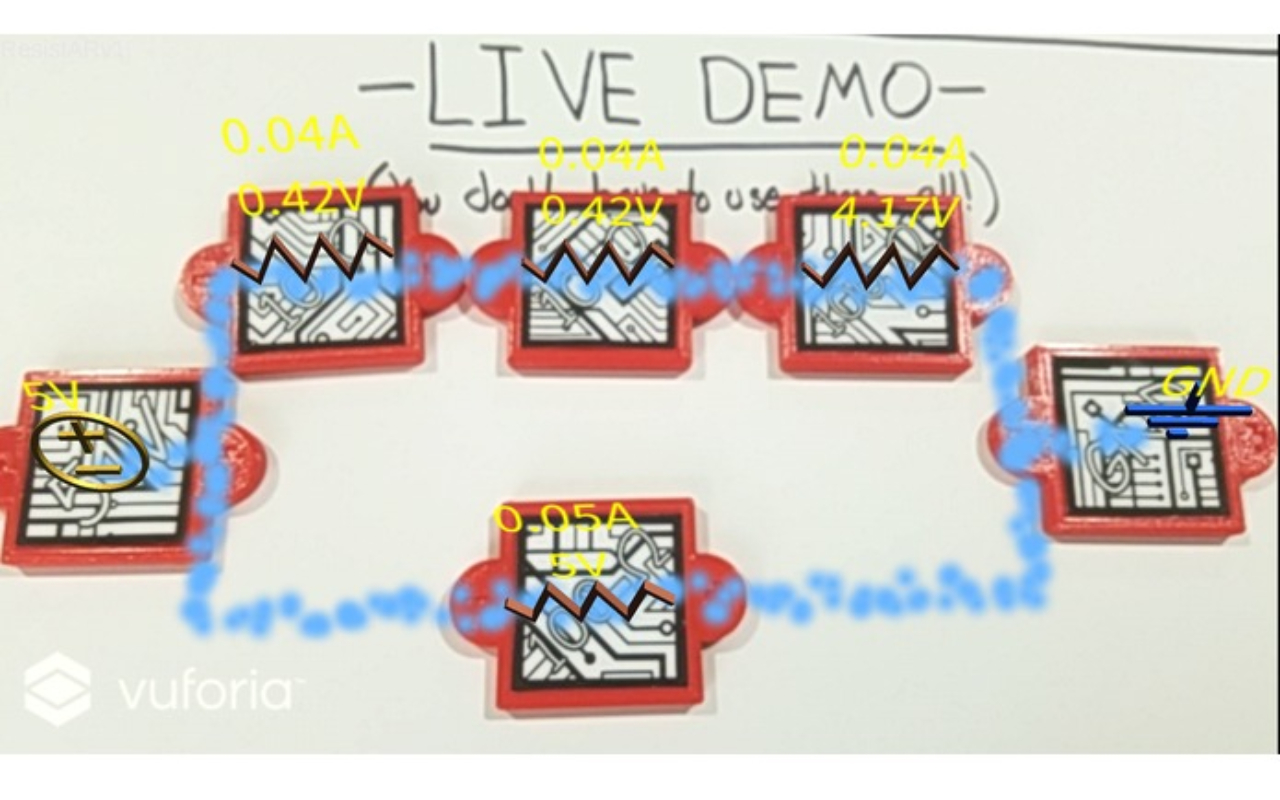

When you're at a hackathon with 3 other ECE (electrical and computer engineering)

majors, you shouldn't be surprised if your project is about resistors. Our idea was

to design 3D printed parts representing circuit components (source, ground, resistor),

and then make an app that would visualize the circuit flow through a given circuit

configuration. None of us had experience with augmented reality, but we decided to

go for the challenge. The project would also have educational applications. We

happened upon the Vuforia SDK which could be coupled with Unity to make augmented

reality apps using QR codes as targets. I primarily worked on the algorithms that

output the electron flow direction and locations and solved for the resistor

potential differences and electric current. We made ResistAR at TartanHacks, Carnegie

Mellon's premier hackathon, and won the grand prize.
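
To give a flavor of the circuit-solving step, here is a minimal sketch in Python. It is not the ResistAR code: it assumes the simplest possible configuration (one source, resistors in a single series loop, then ground), and the function name `solve_series_circuit` is made up for illustration.

```python
def solve_series_circuit(source_voltage, resistances):
    """Return (current, voltage_drops) for resistors in series across one source.

    Assumption for this sketch: a single loop of source -> resistors -> ground.
    """
    total_resistance = sum(resistances)
    if total_resistance <= 0:
        raise ValueError("total resistance must be positive")
    # Ohm's law for the whole loop: I = V / R_total
    current = source_voltage / total_resistance
    # Potential difference across each resistor: V_k = I * R_k
    voltage_drops = [current * r for r in resistances]
    return current, voltage_drops


if __name__ == "__main__":
    current, drops = solve_series_circuit(9.0, [100.0, 200.0])
    print(f"current: {current:.3f} A")
    print("drops:", [f"{v:.2f} V" for v in drops])
```

In a series loop the same current passes through every resistor, so the drops always add up to the source voltage. Parallel branches would need a more general approach such as nodal analysis.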

September 2017

Inventex is an internet-enabled hardware sorter and inventory maintenance machine.

It works with a mobile app built in React Native. I was in charge of making the

mobile app and configuring the Raspberry Pi to take incoming commands. The problem

it targets is messy workspaces. With Inventex, you can scan the piece of

hardware that you want to deposit with the app, then put it in the machine's input

slot. The machine then allocates a bin for that category of hardware and deposits

the hardware in it. When you want to retrieve something, you can select the

hardware type and amount from the app interface and the machine will spit it out for

you. We made Inventex at PennApps, and we won the best hardware hack award.

November 2017

At the Facebook Global Hackathon, my team and I created Facebook Discourse. A bit of background about the Facebook Global Hackathon: every year, Facebook invites the winners of a handful of hackathons from across the globe. When I participated, there were 20 teams from 11 different countries. I qualified because my team won the grand prize at TartanHacks. Other US hackathons represented included PennApps, HackMIT, and TreeHacks (Stanford). Facebook Discourse is a debate platform that fosters positive discourse by limiting spectators to positive interactions, such as up-voting debate points and adding credible sources. Additionally, users can follow debate threads that are automatically detected by the system. The platform could be used with presidential debates to visualize the arguments. We presented Facebook Discourse to the VPs of technology of OculusVR, WhatsApp, and Instagram and won first prize.

January 2018

At PennApps, my team and I made Modware, a modular hardware prototyping kit that

takes the "hard" out of hardware for software engineers. Modware is a magnetic board

with magnetically attachable hardware components such as light switches, knobs, and

lights. The idea is that with Modware, the user would be able to easily hook up

hardware components to anything they might need without having to know the details

of electrical engineering. Modware also includes a UI to connect

the components to each other and configure APIs with the hardware components.

One of the examples we set up was using a knob to control the depth of a Sierpinski

triangle rendering. A software engineer could also make a light flash to alert

them of something like a stock hitting a certain price. I personally worked on the

backend design including data structures and data passing and also the examples of

how Modware can be used by a software engineer. Modware earned us four awards: the

PennApps 2nd place prize, Lutron's IoT award, Best Hardware Hack, and the Hacker's

Favorite award.

August 2018

I created this website with raw HTML, CSS, and JavaScript. I had only

a loose understanding of how CSS works from my experience with React

and React Native, so I decided to get more experience by making this

website. The only third party code that I used was for the projects

picker widget below and also for the tool to register keyboard input

for the easter egg game you can play if you click on the fox at the

very bottom of this page (the game is not available on mobile).

September 2018

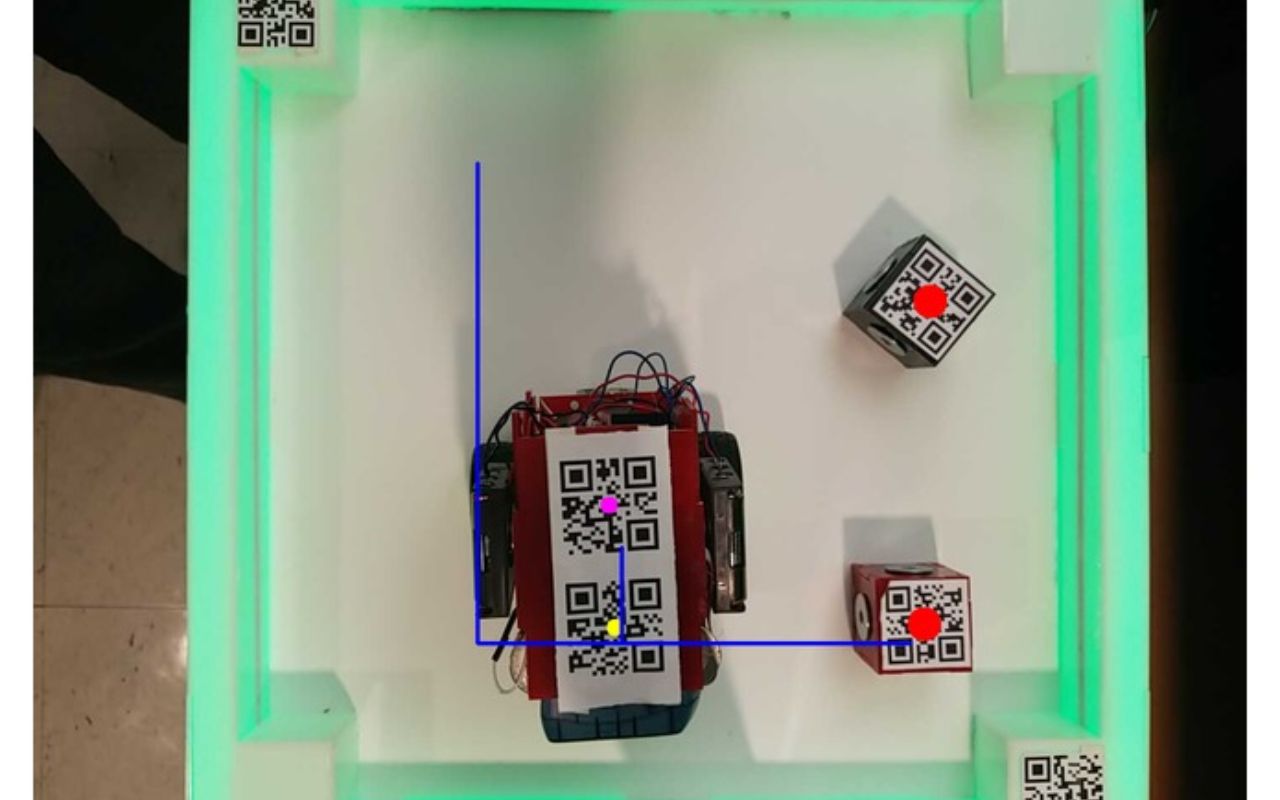

The Simon System consists of Simon, our robot that learns to perform the human's input

actions. There are two "play" fields, one for the human to perform actions and the other

for Simon to reproduce actions. Everything starts with a human action. The Simon System

detects human motion and records what happens. Then those actions are interpreted into

actions that Simon can take. Then Simon performs those actions in the second play field,

making sure to plan efficient paths taking into consideration that it is a robot in the

field. I implemented the computer vision, path planning, robot feedback control, and

communication between components of the project. The computer vision system

detects when updates are made to the human control field and acquires the normalized grid

size of the play field using QR boundaries. The robot's orientation is also corrected

using information from the computer vision. The path planning system uses a modified

BFS algorithm taking into account path smoothing with realtime updates from the

low-level controls to recalibrate the path plan throughout execution. Communication

throughout the project uses Bluetooth and Unix sockets.
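
For readers curious about the path planning, here is a minimal Python sketch of plain BFS on an occupancy grid. It is only the textbook baseline, not the Simon System's planner: the path smoothing and realtime recalibration described above are left out, and the grid encoding (0 for free, 1 for blocked) and the name `bfs_path` are assumptions made for this example.

```python
from collections import deque


def bfs_path(grid, start, goal):
    """Shortest 4-connected path on a grid, or None if the goal is unreachable.

    Assumption for this sketch: grid[r][c] == 1 marks a blocked cell.
    """
    rows, cols = len(grid), len(grid[0])
    parent = {start: None}  # doubles as the visited set
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk the parent links back to the start, then reverse.
            path = []
            while cell is not None:
                path.append(cell)
                cell = parent[cell]
            return path[::-1]
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            in_bounds = 0 <= nr < rows and 0 <= nc < cols
            if in_bounds and grid[nr][nc] == 0 and (nr, nc) not in parent:
                parent[(nr, nc)] = cell
                queue.append((nr, nc))
    return None


if __name__ == "__main__":
    field = [
        [0, 0, 0],
        [1, 1, 0],
        [0, 0, 0],
    ]
    print(bfs_path(field, (0, 0), (2, 0)))
```

Because BFS expands cells in order of distance from the start, the first time it reaches the goal it has found a path with the fewest grid moves. A smoothing pass would then reduce the number of turns the robot has to make.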

At Cruise, I owned the Cruise ML runtime, which executes all 50+ ML models in the autonomous vehicle stack. I oversaw cross-functional feature prioritization, technical support, and knowledge sharing.

I led three engineers to deliver a solution that centralizes the metadata and metrics of deployed ML models at Cruise. The project was conceived at an internal hackathon where it won two awards: People's Choice and Best Demo.

I mentored an intern to enable specification of max size of dynamic dimensions in the ML runtime.

I coordinated weekly team deep dives.

I wrote performant C++/CUDA programs which implement perception algorithms including mean shift clustering and voxelization to unblock ML model deployment.

I optimized memory usage in the Cruise ML runtime by adding static buffer sharing capabilities, saving over 1 GB of GPU memory (~5% improvement) plus more in future model deployments.

I decoupled PyTorch from the ML runtime to improve on-road software stability.

I investigated SYCL as a candidate cross-platform HPC programming solution.

At Cruise, I investigated and adapted deep learning compiler technologies for machine learning inference on the vehicle. This involved getting familiar with Cruise's perception models, learning the details of the technologies involved, using profiling tools to gain more insight into inference performance, and adding features for Cruise's needs.

I also worked on the Cruise ML runtime to unify and streamline the deployment of models onto the autonomous vehicle.

This is Carnegie Mellon's introductory machine learning course targeted at Master's students. It covers all or most of: concept learning, decision trees, neural networks, linear learning, active learning, estimation & the bias-variance tradeoff, hypothesis testing, Bayesian learning, the MDL principle, the Gibbs classifier, Naive Bayes, Bayes Nets & Graphical Models, the EM algorithm, Hidden Markov Models, K-Nearest-Neighbors and nonparametric learning, reinforcement learning, bagging, boosting and discriminative training. My responsibilities included leading recitations and designing assignments and tests.

At Aurora, I built the core communication system between the self-driving cars and the fleet monitoring dashboard. I worked with Qt, nginx, Flask, React, and Redux. My software was promptly adopted into the Vehicle Operations team's daily workflow. (The Vehicle Operations team is in charge of carrying out tests on the vehicles.)

During my internship, I also added hyperparameter tuning capabilities to the perception training script and created a tool to plot in Google Earth the global poses of perception training data along with their properties. For these projects I used TensorFlow, Python, and C++.

This is a course about fundamental computing principles for students with minimal or no computing background. It teaches programming constructs and also covers history and applications of computing. My responsibilities included leading recitation, conducting office hours, grading assignments/tests, and discussing how to improve the course. I spent around 8-10 hours a week on this.

I worked on this research project at the Carnegie Mellon Center for Machine Learning and Health. GenAMap is a visual machine learning platform for genome-wide association studies. I primarily designed and implemented systems for transferring data between the backend and frontend. I used MongoDB, Node.js, Node.js C++ Addons, React, and Redux.

See description above.

Last updated June 23, 2023.